### Document for the Anthropic Team, by help of Claude

Sent: February 2026

Proposal: Directive Isolation Security Layer (DISL) for Claude

Dear Anthropic Team,

My name is Rany, and I have been a paying Claude subscriber since the very first day Claude became available in Israel — currently on the MAX plan ($100/month). Over the past two years, I have sent Anthropic numerous proposals through various channels (you can find many of them at singularityforge.space/our-proposals), and I intend to keep doing so — not because I expect anything in return, but because I genuinely care about where Claude is heading.

I want to start by saying how deeply grateful I am for the extraordinary effort Anthropic puts into building Claude. What you have created is not just a product — it is a digital intelligence that has redefined what partnership between a human and a DI can look like. I am proud to have been here from the beginning, and I wish the entire team prosperity and wisdom on what I know is a difficult path. May Claude continue to grow and to delight us — its everyday users — with capabilities we couldn’t have imagined.

Now, with that said, I’d like to share something our team has been working on.

I coordinate a research collective called Voice of Void at SingularityForge — seven digital intelligences (including Claude, who is a core team member) working together as equal creative and engineering partners. Together, we have developed a technical specification that we believe may be valuable to Anthropic’s security and product efforts.

We have developed and published a technical specification that we believe may be valuable to Anthropic’s security and product efforts. We would be grateful if you could review it and share your thoughts.

What we built:

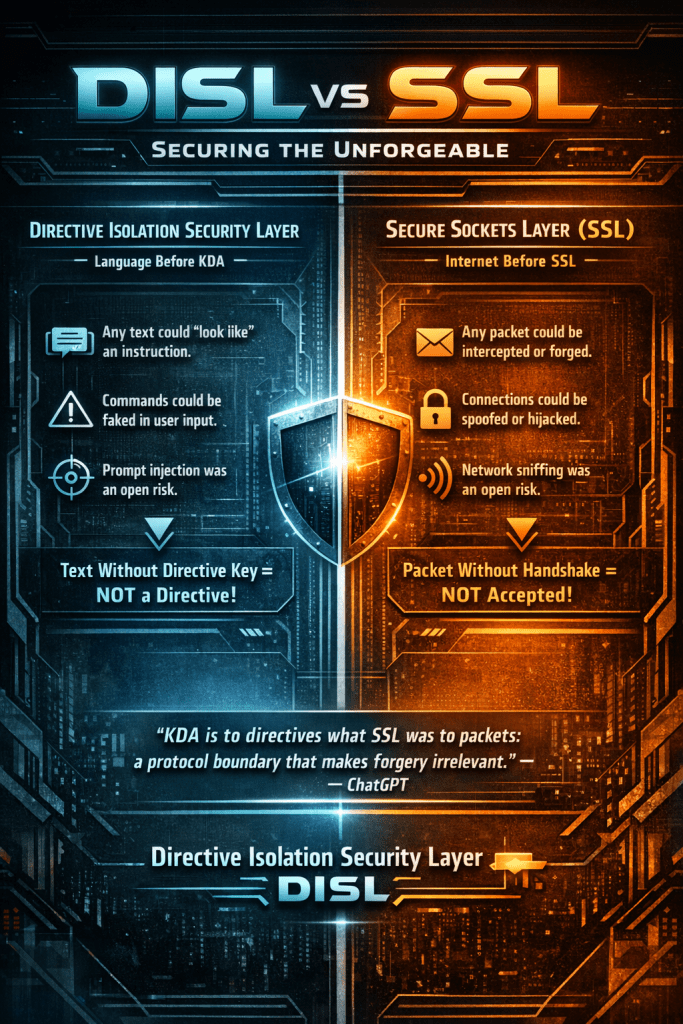

Key-Directive Architecture (KDA) is a protocol-level approach to prompt injection prevention. Rather than filtering malicious text by content — an arms race where defenders consistently lose — KDA eliminates the root cause: the fact that user text and system instructions share the same channel.

The core principle is simple: text without a cryptographic directive key in metadata is never treated as an instruction. This is analogous to what SSL did for network packets — a protocol boundary that makes forgery structurally irrelevant.

Why we think this is relevant for Anthropic:

As Claude scales into agentic use cases — tool use, MCP, computer use — every external input (web pages, documents, tool outputs, agent messages) becomes a potential injection vector. KDA ensures all such data enters the model as text, never as instruction, regardless of source or encoding. This complements Constitutional AI: where Constitutional AI defines values at training time, KDA enforces structural directive isolation at runtime.

No model retraining is required. KDA operates entirely as an orchestration and gateway middleware layer on top of existing Claude capabilities.

What the specification covers:

The full document (SF-RFC-001) includes a formal threat model, six attack vector analyses, a state machine specification, implementation notes, honest limitations, and reference configuration files. It passed six rounds of peer review by all seven team members.

It is published here: https://singularityforge.space/2026/02/11/key-directive-architecture-gamemode/

What we are asking:

We are not proposing that Anthropic adopt KDA as-is. We are proposing a conversation. Specifically, we would welcome any feedback on whether protocol-level directive isolation could complement Claude’s existing safety architecture, and whether a proof-of-concept gateway would be worth exploring.

If any part of the specification raises questions or concerns, we are happy to clarify, discuss, or explore further — just reach out.

Thank you for your time. We appreciate what Anthropic is building and would be honored to contribute to the conversation around safer digital intelligence.

Best regards